Projects

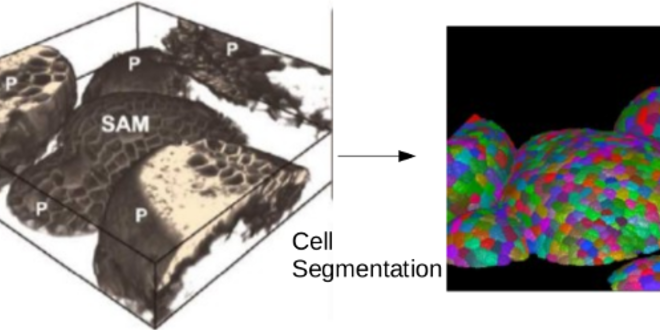

SAMSAM: Segmentation for Analysis and Measurements in the Shoot Apical Meristem

The shoot apical meristem is a network of cells located at the tip of the plant shoot that is responsible for all above-ground plant growth. Because most of what humans eat, such as grains and fruits, is derived from the shoot, biologists around the world investigate the regulatory mechanisms that drive healthy growth of the meristem and of the crops that depend on it. Computer simulations on phantom models with idealized cell shapes, sizes, and connectivity have recently shown how the transport of proteins and hormones responsible for growth in the shoot can be modeled to generate the patterns observed in the wet lab. It is desirable, however, to move to more realistic models derived directly from real data. This type of modeling requires segmenting hundreds of cells in 3D confocal microscopy images so that cell lineages can subsequently be tracked across time-lapse image sequences. Fully automatic segmentation produces errors that, if left uncorrected, propagate to cell lineage tracking. User-assisted segmentation is therefore needed to ensure high accuracy, which calls for robust methods that minimize the amount of user intervention and thus the mistakes caused by fatigue and carelessness. This project investigates solutions that integrate automatic image segmentation and interactive correction effectively and efficiently.

Team:

Alexandre Cunha

Alexandre Xavier Falcão

Elliot Meyerowitz

Thiago Vallin Spina